A new analysis from Cyrus Shepard argues that AI citations are not replacing traditional SEO signals as much as extending them.

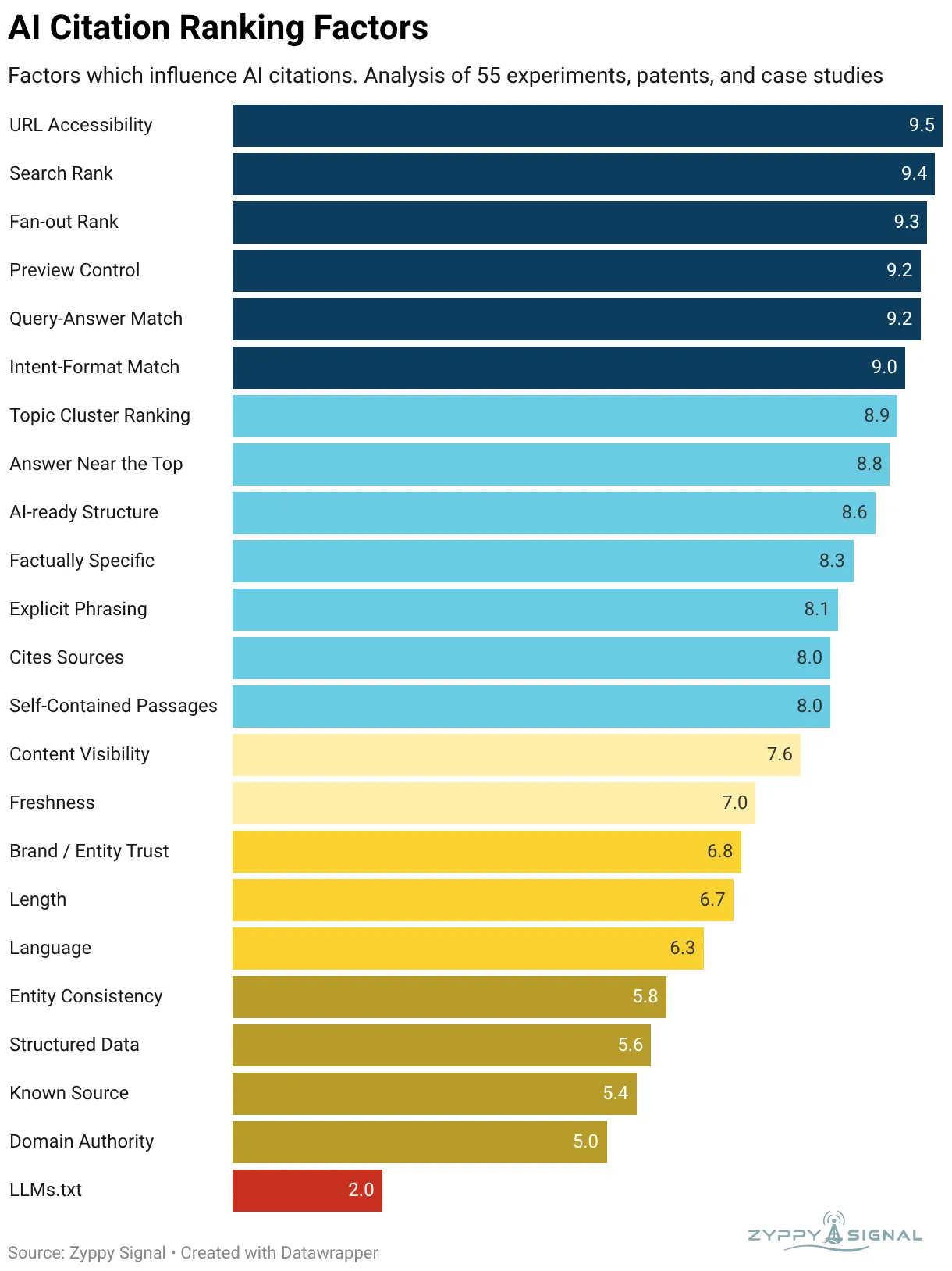

In a new AI Citation Ranking Factors analysis, Shepard reviewed 55 experiments, patents, studies and case studies covering AI citation behavior across systems including ChatGPT, Gemini and Perplexity. The goal was to identify which content characteristics appear most often in pages cited by AI engines.

The main takeaway is simple: winning citations in AI answers does not require throwing away the SEO playbook. The strongest signals still look familiar: crawlability, search visibility, relevance, topical coverage, clear structure and trustworthy sourcing.

What are AI citations?

AI citations are clickable source links that appear inside or alongside AI-generated answers. They are used by systems such as Google AI Overviews, Perplexity, ChatGPT search experiences and other answer engines to support claims and point users toward source material.

For publishers and site owners, citations matter because AI answers can reduce the need for users to click through to the open web. At the same time, being cited can still create visibility and traffic. Shepard cites a Seer Interactive study that found brands cited in Google AI Overviews saw 120% more organic clicks per impression and a 41% increase in paid clicks compared with cases where the brand was not cited.

The strongest factor: accessibility

The highest-scoring factor in Shepard’s analysis is URL accessibility.

That sounds basic, but it may become more important as more sites block AI crawlers, use stricter bot controls or rely on tools that limit scraping. If a page cannot be accessed during training, crawling or grounding, it is less likely to be used as a source.

Shepard’s analysis gives URL accessibility a score of 9.5 out of 10. The practical implication is clear: if publishers want AI systems to cite their pages, they need to understand which bots they are allowing, which they are blocking and whether important content is visible to crawlers.
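One low-effort way to start that audit is to test a robots.txt file against the user-agent tokens AI vendors publish. The Python sketch below uses the standard library's `urllib.robotparser`. The sample robots.txt, the URL and the agent list are illustrative assumptions, and vendor token names change, so check each vendor's current documentation. `urllib.robotparser` uses simple prefix and substring matching rather than every crawler's exact precedence rules. Robots.txt is also only one layer: CDN or firewall bot rules can block a crawler that robots.txt allows.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for illustration; substitute your site's real file.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: PerplexityBot
Disallow: /private/

User-agent: *
Allow: /
"""

# Assumed list of AI-related user-agent tokens; verify current names with each vendor.
# Google-Extended is a robots.txt control token, not a separate crawler.
AI_USER_AGENTS = ["GPTBot", "OAI-SearchBot", "PerplexityBot", "ClaudeBot", "Google-Extended"]


def audit_ai_access(robots_txt: str, url: str, agents: list[str]) -> dict[str, bool]:
    """Return whether each user agent may fetch `url` under the given robots.txt rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() also marks the rules as loaded
    return {agent: parser.can_fetch(agent, url) for agent in agents}


if __name__ == "__main__":
    result = audit_ai_access(SAMPLE_ROBOTS_TXT, "https://example.com/guides/ai-citations", AI_USER_AGENTS)
    for agent, allowed in result.items():
        print(f"{agent:16} {'allowed' if allowed else 'blocked'}")
```

With this sample file, only GPTBot is blocked for the guide URL, while PerplexityBot is blocked only under `/private/`. Agents without their own group fall back to the `*` rules.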

Traditional search rankings still matter

The second major finding is that traditional search visibility appears strongly connected to AI citations.

Shepard lists search rank as one of the highest-scoring factors, with a score of 9.4. In other words, pages that rank well for the original query are more likely to be cited by AI systems. He points to Ahrefs research finding that 38% of Google AI Overview citations come from URLs already ranking in the top 10 organic results.

This does not mean AI systems only cite top-ranking pages. By the same Ahrefs figure, most cited URLs sit outside the top 10. But it does suggest that organic search strength still gives publishers a meaningful advantage in AI visibility.

That is important because many marketers have treated AI search optimization as a separate discipline. Shepard’s analysis suggests the overlap with traditional SEO is still substantial.

Fan-out queries are becoming more important

One of the more useful concepts in the analysis is fan-out rank.

AI systems often do not answer a query by looking only at the exact wording of the user’s question. They may break the query into related subqueries, supporting questions and adjacent information needs. These are often called fan-out queries.

Shepard gives fan-out rank a score of 9.3. That means a page or site that ranks across related queries may be more likely to earn citations, even if the original prompt is broader or phrased differently.

For SEO teams, this reinforces the value of topical authority. A single page optimized for one keyword is less useful than a cluster of pages that collectively answer the surrounding questions an AI system may need to ground its answer.
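Shepard's analysis does not publish a formula for fan-out rank, so the following is only a hypothetical way a team might track it. The approach is to list subqueries an AI system could plausibly generate, record the site's organic rank for each, and measure the share that land in the top 10. The subqueries and ranks are invented for illustration. Real systems generate fan-out queries internally and generally do not expose them.

```python
from typing import Optional


def fan_out_coverage(ranks: dict[str, Optional[int]], top_n: int = 10) -> float:
    """Share of subqueries for which the site ranks within top_n (None means not ranking)."""
    if not ranks:
        return 0.0
    hits = sum(1 for rank in ranks.values() if rank is not None and rank <= top_n)
    return hits / len(ranks)


# Hypothetical subqueries an AI system might derive from one prompt, with invented ranks.
observed = {
    "best crm for small business": 12,
    "crm pricing for small teams": 4,
    "crm vs spreadsheet": 7,
    "easiest crm to set up": None,
}

print(f"{fan_out_coverage(observed):.0%}")  # 2 of 4 subqueries in the top 10 -> 50%
```

A page that misses the head query (rank 12 here) can still cover half of the surrounding questions. That is the kind of breadth the fan-out idea rewards.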

AI citations favor clear answers near the top

The analysis also highlights how AI systems retrieve and process content.

Shepard gives “answer near the top” a score of 8.8. The idea is that important information placed high on the page is more likely to be retrieved and cited than information buried deep in long content.

This is not a call to write thin content. It is a reminder that AI systems often work with retrieval limits. If the key answer is hidden below long introductions, generic context or unrelated sections, the passage may never make it into the retrieval window.
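A simplified model makes the retrieval-limit point concrete. This sketch assumes a hypothetical fixed word budget and keeps paragraphs in page order until the budget runs out. Real systems chunk and rank passages by relevance, so treat it as an illustration of the positional risk, not of how any specific engine works.

```python
def in_retrieval_window(paragraphs: list[str], answer: str, word_budget: int = 150) -> bool:
    """Return True if `answer` sits in a paragraph that fits inside the first `word_budget` words.

    `word_budget` is a hypothetical stand-in for a retrieval limit; real engines
    chunk and rank passages by relevance rather than by position alone.
    """
    used = 0
    for para in paragraphs:
        used += len(para.split())
        if used > word_budget:
            return False  # this paragraph and everything after it fall outside the window
        if answer in para:
            return True
    return False


ANSWER = "Short answer: yes, if the page is crawlable."
FILLER = "Background context. " * 40  # 80 words of generic preamble

buried = [FILLER, FILLER, ANSWER]  # answer starts after 160 words
top = [ANSWER, FILLER, FILLER]     # answer is the first paragraph

print(in_retrieval_window(buried, ANSWER))  # False
print(in_retrieval_window(top, ANSWER))     # True
```

The content is identical in both versions. Only the order changes, which is the point of answering first and expanding afterward.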

For publishers, this means articles should answer the core question early, then expand with nuance, examples, evidence and context.

Structure and extractability matter

Another high-scoring factor is AI-ready structure, which Shepard gives a score of 8.6.

This does not mean every page needs to be rewritten into artificial “chunks.” It means content should be easy to parse. Clear headings, self-contained sections, tables, lists and direct explanations can help AI systems identify what a passage is about and whether it supports a specific answer.

Closely related factors include factually specific content, explicit phrasing and self-contained passages. Shepard’s analysis suggests that AI systems are more likely to cite statements that are specific, complete and easy to quote as evidence.

For example, a vague sentence such as “this method works well for many sites” is less useful than a concrete claim that explains what works, for whom and under what conditions.

Citing sources may help pages get cited

The analysis also gives “cites sources” a score of 8.

This is one of the more interesting findings. If AI systems are trying to produce answers that can be justified, pages that show their own sources may be more useful as citation targets. This does not mean every sentence needs a footnote. But important claims, statistics and recommendations should be supported by original data, documentation, research or direct evidence.

For publishers, this is a shift toward more transparent editorial work. Showing how a conclusion was reached may become more valuable in AI search than simply stating the conclusion.

Structured data helps, but may not be decisive

Structured data appears in the analysis, but it does not rank near the top.

Shepard gives structured data a score of 5.6. He notes that the evidence is debated, because large language models do not necessarily ingest schema in the same way search engines process structured data. Still, he says studies that looked at schema and AI citations often found a small but consistent positive relationship.

The takeaway is balanced: schema is probably not the magic lever for AI visibility, but clean structured data may still help systems understand entities and page context.
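For reference, a minimal schema.org `Article` block in JSON-LD can be generated as shown here. The headline, author, date and topic values are placeholders. `about` is one of several schema.org properties that can name a page's main subject. Consistent with the mixed evidence Shepard describes, this is shown as clean markup, not as a proven citation lever.

```python
import json


def article_jsonld(headline: str, author: str, date_published: str, topic: str) -> str:
    """Build a schema.org Article JSON-LD snippet wrapped in a <script> tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,  # ISO 8601 date string
        "about": {"@type": "Thing", "name": topic},  # names the page's main entity
    }
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


# Placeholder values for illustration only.
print(article_jsonld("What drives AI citations?", "Jane Doe", "2025-01-15", "AI citations"))
```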

LLMs.txt scores low

One of the weakest factors in the analysis is LLMs.txt.

Shepard gives it a score of 2 and says he could not find credible evidence showing that LLMs.txt files influence AI citations in a meaningful way.

That does not mean the idea is useless forever. But based on the current evidence reviewed in the analysis, LLMs.txt does not appear to be a major citation driver today.

What this means for publishers and SEOs

The practical message is not “forget SEO and optimize for AI.” It is closer to the opposite.

Pages that are accessible, relevant, well-ranked, clearly structured, specific, sourced and part of a broader topic cluster appear better positioned to earn AI citations. Those are not foreign concepts to SEO teams. They are extensions of good search strategy.

The difference is emphasis.

AI search rewards content that can be retrieved, understood and used as evidence. That makes extractability more important. It also makes vague writing, buried answers and unsupported claims more costly.

The Query Post view

The most important part of Shepard’s analysis is its restraint. It does not claim that AI citation optimization is a fully solved discipline. It also does not pretend that every correlation is a confirmed ranking factor.

That distinction matters. AI citations are still a moving target, and different systems may use different retrieval, ranking and grounding methods. Google AI Overviews, ChatGPT, Gemini and Perplexity should not be treated as one identical system.

But the direction is becoming clearer. AI search does not remove the need for SEO fundamentals. It raises the standard for them.

For site owners, the best near-term strategy is straightforward: keep pages crawlable, rank for the query and its related fan-out topics, answer clearly near the top, use specific evidence, cite sources where it matters and make important passages easy to extract.

In other words: win SEO, then make the content easier for AI systems to understand and cite.